This is a complementary blog post to a video from the ShopifyDevs YouTube channel. It is the final post from a three-part series created by Zameer Masjedee, a Solutions Engineer with Shopify Plus. See part one, An Introduction to Rate Limits, part two, API Rate Limits and Working with GraphQL, and part three, Implementing API Rate Limits in Your App.

In this post, I’ll continue working in the application and development store introduced in part three of this series, Implementing API Rate Limits in Your App. First, I’ll teach you how to capture rate limit information that is crucial to running your app. Then I’ll walk you through best practices for optimizing your app so that it uses your limits responsibly.

By the end of this article, you’ll be able to responsibly consume your rate limit, avoid 429 responses, and build a fast, functional app.

Note: All images in this article are hyperlinked to the associated timestamps of the YouTube video, so you can click on them for more information.

How to capture rate limit information

In this section we’re going to go through how to capture all the necessary information you’ll need regarding your rate limit. We’ll begin by showing you how to create your initial `rawRequest`, and then we’ll walk through the various elements you’ll need to include in your request, including creating the initial structure.

Creating your request

We begin by using a `rawRequest` to capture all that information. It looks like this:

We’re going to say `await GraphQLClient.rawRequest(productUpdateMutation, productInput)`.

Formulating your `then` clause

We’re also going to use a `then` clause, which allows us to wait until this execution is done before running some other business logic. We’ll go over why we’re structuring the query this way a bit below, but for now, know that the skeleton of your `then` clause should look like this:

`.then(({ errors, data, extensions, headers, status }) => { })`

Inside this clause is where we define what needs to happen, first logging the response: `console.log(JSON.stringify(data, undefined, 2))`. The other thing we want to know is how much API rate limit we still have available. The `log` for that request looks like this:

`` console.log(`There are ${extensions.cost.throttleStatus.currentlyAvailable} rate limit points available\n`) ``

Your completed, final `then` clause should look like this:

It should be nested inside your `await` clause. So, your whole `rawRequest` should look like this:
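As a minimal sketch of how these pieces fit together: the names `GraphQLClient` and `rawRequest` match the `graphql-request` library, which is assumed here. So that the example runs without a store or network access, `stubClient`, the mutation string, and the `productInput` value are hypothetical stand-ins; the stub only mimics the fields that the `then` clause destructures.

```javascript
// Hypothetical stand-in for the GraphQLClient instance from part three.
// It makes no network call; it only mimics the shape of a rawRequest response.
const stubClient = {
  rawRequest(query, variables) {
    return Promise.resolve({
      data: { productUpdate: { product: { id: variables.input.id } } },
      extensions: { cost: { throttleStatus: { currentlyAvailable: 990 } } },
      headers: {},
      status: 200,
    });
  },
};

// Illustrative values; in your app these come from your own query results.
const productUpdateMutation =
  'mutation productUpdate($input: ProductInput!) { productUpdate(input: $input) { product { id } } }';
const productInput = { input: { id: 'gid://shopify/Product/1' } };

async function updateProduct(client) {
  let available;
  // await holds execution here until the request and its then callback have finished.
  await client
    .rawRequest(productUpdateMutation, productInput)
    .then(({ errors, data, extensions, headers, status }) => {
      console.log(JSON.stringify(data, undefined, 2));
      available = extensions.cost.throttleStatus.currentlyAvailable;
      console.log(`There are ${available} rate limit points available\n`);
    });
  return available;
}

updateProduct(stubClient);
```

In a real app you would pass your configured client instead of `stubClient`, and the logged number would come from Shopify's response rather than the fixed value used here.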

Now with our extensions we can take a look at the cost to see how much rate limit we still have available. Make sure to save everything, clear your console, and then press run.

You can see that when we made the initial request, we had 958 rate limit points left because we were making a query for 10 different products. In order to find the mutations in your terminal, you want to scroll down to just below all that data we got back from our request.

It’s showing us that we started with 969 rate limit points. By the time we made that second mutation, we actually had 975 rate limit points. Then it increased again by seven points, to 982.

This is our leaky bucket algorithm in action. As you might be able to recall, we get 50 more points every second. We’re observing that in the fluctuation of rate limit points available above. In the amount of time it takes us to make the mutation, wait for the response, print the response and make the next mutation, our rate limit is changing.

Recall that a mutation takes just 10 points. This explains why, in less than a second, we're able to refill our rate limit faster than we're able to use it. This means that in theory I should be able to let this run for as long as I have products available and I'm not going to eat into all of my rate limit. It will not run into a rate limit error if it's able to constantly refill it faster than it's able to consume it.
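The arithmetic behind this can be sketched in a few lines. The restore rate, mutation cost, and bucket size come from the figures in this series; the 340 ms request duration is a hypothetical value chosen only for illustration.

```javascript
const RESTORE_RATE = 50;   // points restored per second
const MUTATION_COST = 10;  // points consumed by one mutation
const BUCKET_SIZE = 1000;  // maximum points available

// Net change in available points across one awaited request.
function netChangePerRequest(msPerRequest) {
  return (RESTORE_RATE * msPerRequest) / 1000 - MUTATION_COST;
}

// Available points after a number of sequential, awaited requests.
function availableAfter(start, requests, msPerRequest) {
  let available = start;
  for (let i = 0; i < requests; i++) {
    available = Math.min(BUCKET_SIZE, available + netChangePerRequest(msPerRequest));
  }
  return available;
}

console.log(netChangePerRequest(200));     // 0: at 200 ms per request the bucket breaks even
console.log(availableAfter(969, 2, 340));  // 983: slower requests refill more than they spend
```

Any awaited request that takes longer than 200 ms restores more points than the mutation costs, which is why the available total climbs when requests run one at a time.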

The only problem with this structure is that while you're not wasting your rate limit, you’re not optimizing it either. Shopify gives you 1,000 rate limit points, so use them! That's how you can operate your app as efficiently as possible.

Optimizing by removing your `await` clause

One thing we can do to achieve this is to remove the `await` from our `rawRequest`.

When working with Node.js, an asynchronous request returns some sort of future, or `Promise`, that stands for whatever the request will ultimately return. By using the `await` keyword, we’re saying that we want to wait for that promise to complete before moving on.

If we choose not to use it, our `rawRequest` can execute as fast as we iterate through the `for` loop, making multiple asynchronous requests in a row. That would definitely consume your API rate limit faster.

We’re going to take a look at that in action, but before doing so we need to make some changes to our `rawRequest`. To implement best practices, we’re going to add a `catch` clause at the bottom of our `rawRequest` because we’re expecting an error, and we want to log that error. It should look like this:
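A minimal sketch of a request sent without `await` and finished with a `catch` clause follows. The `stubClient` is a hypothetical stand-in that rejects every third call to imitate a throttled request, and `errorsSeen` and `sendWithoutWaiting` are illustrative names rather than part of the original app.

```javascript
// Hypothetical stub: rejects every third call to imitate a throttled request.
let calls = 0;
const stubClient = {
  rawRequest() {
    calls += 1;
    if (calls % 3 === 0) {
      return Promise.reject(new Error('Throttled'));
    }
    return Promise.resolve({
      extensions: { cost: { throttleStatus: { currentlyAvailable: 1000 - calls * 10 } } },
    });
  },
};

const errorsSeen = [];

function sendWithoutWaiting(client, mutation, input) {
  // No await: the request starts and the caller moves on immediately.
  return client
    .rawRequest(mutation, input)
    .then(({ extensions }) => {
      console.log(`There are ${extensions.cost.throttleStatus.currentlyAvailable} rate limit points available\n`);
    })
    .catch((error) => {
      // Without a catch, a rejected request surfaces as an unhandled rejection.
      errorsSeen.push(error.message);
      console.log(`Request failed: ${error.message}`);
    });
}

// Start six requests back to back; none of them blocks the loop.
const pending = [];
for (let edge = 0; edge < 6; edge++) {
  pending.push(sendWithoutWaiting(stubClient, 'productUpdateMutation placeholder', {}));
}
```

Because the loop never waits, all six requests are in flight at once, and the failures are logged instead of crashing the script.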

The other thing we want to change is the number of products we’re querying for. Instead of trying to retrieve 10 products at once, we’re going to make the request to retrieve 100 products at once.

Once you’ve made these adjustments, save it, clear your terminal, and run it again.

Hitting your rate limit

There should be quite a few changes happening in your terminal. You won’t be able to see the entire history, but you should see a number of errors, some requests being made, and finally the rate limit points that are left.

Now, clearly if we’re running into errors this method doesn't work well. This isn’t good production level code. We’ll need to put some safeguards in place to prevent those errors.

However, what it does do is bring our rate limit down to three. It eats up all of that 1,000, and that's a good thing. It's consuming as much as it can. But the problem is that it's consuming more than it should.

We need to find a way to optimize it, without going over our rate limit. That's what we're going to do next.

Optimizing your rate limit by pairing synchronous and asynchronous requests

One way to optimize your rate limit is by pairing up a sequence of synchronous and asynchronous requests. Every time we go through our `for` loop, we don't want to wait for every single request. That's not fast enough. We’re going to wait for every other request.

This means that effectively in a second, or the amount of time it takes for a single request to process, we’re sending two requests instead. As a result, we should see our rate limit decreasing over time.

In our example, we're keeping track of the index of this loop using our `edge` parameter. On it we’re going to create a little conditional `if` statement.

Using the modulus operator, we’re saying that `if` our `edge` is even, that is, if the remainder of every other `edge` is equal to (`==`) zero (`0`), we want to wait for our `rawRequest` to complete. If it's not (`else`), then we’re going to want to run it asynchronously. This means that we’re putting two requests in, but we’re only waiting for every other one.
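The conditional described above can be sketched as follows. The `stubClient` here is hypothetical: it resolves after a short delay and counts how many requests are in flight, so the effect of waiting on every other request is visible. The `edge % 2 == 0` test is an assumption based on the description of "even" indexes.

```javascript
// Hypothetical stub: resolves after 20 ms and records concurrency.
let inFlight = 0;
let maxInFlight = 0;
const stubClient = {
  rawRequest() {
    inFlight += 1;
    maxInFlight = Math.max(maxInFlight, inFlight);
    return new Promise((resolve) => {
      setTimeout(() => {
        inFlight -= 1;
        resolve({});
      }, 20);
    });
  },
};

async function updateAll(client, edges) {
  for (let edge = 0; edge < edges.length; edge++) {
    if (edge % 2 == 0) {
      // Even index: wait for this request before continuing the loop.
      await client.rawRequest('mutation placeholder', edges[edge]);
    } else {
      // Odd index: start the request and continue immediately.
      client.rawRequest('mutation placeholder', edges[edge]).catch((error) => console.log(error));
    }
  }
}

const done = updateAll(stubClient, [0, 1, 2, 3, 4, 5]).then(() => {
  console.log(`Most requests in flight at once: ${maxInFlight}`);
  return maxInFlight;
});
```

Each odd request overlaps with the even request that follows it, so two requests share the time that one awaited request would otherwise take.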

Remember to save your new conditional statement, clear your terminal, and put it to work.

You can see that our available API rate limit is dropping pretty slowly, right? Over time, if you had an endless list of products and let it run indefinitely, you’d see it start to consume your API rate limit. However, we're still sitting at around 500 to 568 points. This indicates that it's not as fast as it can be.

We can do better. We can make more requests.

Before doing so, we’re going to implement some best practices by tying our conditional statement to a variable in our `async function` section. We’re going to write `const numberOfParallelRequests = 3`, and paste it down into our conditional.
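A small sketch of what this variable changes, assuming it simply replaces the literal in the modulus test. `shouldWait` is a hypothetical helper introduced to make the pattern easy to see.

```javascript
const numberOfParallelRequests = 3;

// True when the loop should wait for this request before continuing.
function shouldWait(edge) {
  return edge % numberOfParallelRequests == 0;
}

// Show which loop indexes are awaited and which run asynchronously.
const plan = [0, 1, 2, 3, 4, 5].map((edge) => (shouldWait(edge) ? 'await' : 'async'));
console.log(plan.join(' ')); // await async async await async async
```

With a value of 3, two requests are started without waiting for every one that is awaited, so three requests share each wait.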

When you run it again, your rate limit should go down a lot faster. You should be getting into the 400s and lower. This means that my app is moving a lot faster, which is great. But it’s still not reaching its rate limit before it's done iterating those 100 products.

How do we make sure we’re running as close to the rate limit as possible?

Getting as close to your rate limit as possible: setting up your safety net

So far, we’ve achieved a bit of active optimization, but we can definitely do better. Our goal is to make our `numberOfParallelRequests` as high as possible without actually running into our rate limit by getting our available limit to zero.

The first thing we’re going to do is implement a safety net. This ensures that even if you do consume a lot of your API rate limit and it begins to approach zero, you’re not going to make a request when you’re running close to your rate limit.

In the following example I’ll show you how.

We're already capturing and returning our available rate limit in our logs, under `` console.log(`There are ${extensions.cost.throttleStatus.currentlyAvailable} rate limit points available\n`) ``.

So we’re just going to tie that `log` to a `variable`, so that we have it on hand to use.

In order to do that you’re going to create a new `variable` in your main function. In this example, we’re going to set that new variable to zero, `availableRateLimit = 0`, for now.

Then you’re going to set your `availableRateLimit` variable in both of your `rawRequest` callback functions under the conditional. It’ll look like this:
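One way to sketch this is with a single callback shared by both requests. `recordRateLimit` and `sampleResponse` are hypothetical names, and the response below is made-up sample data shaped like the `extensions` object used earlier.

```javascript
// Declared in the main function's scope so every callback can update it.
let availableRateLimit = 0;

// Used as the then callback of both the awaited and the non-awaited request.
function recordRateLimit({ extensions }) {
  availableRateLimit = extensions.cost.throttleStatus.currentlyAvailable;
  console.log(`There are ${availableRateLimit} rate limit points available\n`);
}

// Hypothetical sample response standing in for a real rawRequest result.
const sampleResponse = {
  extensions: { cost: { throttleStatus: { currentlyAvailable: 870 } } },
};
Promise.resolve(sampleResponse).then(recordRateLimit);
```

Whichever request finished most recently decides the stored value, so the variable always reflects the latest reading the app has seen.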

Great! This gives us a way to keep track of how much we have left. Now we have to set our threshold.

The threshold prevents us from making requests when we’re below a set rate limit. We want to avoid going below zero and hitting those 429 responses.

In order to do that, we’re just going to define another variable. We’re going to call it `rateLimitThreshold`, and we’re going to set it to `50`. Now, 50 isn’t an arbitrary number. We can do some mental math here. We know that every mutation costs 10 query points and I'm doing about three of them at once. That would be 30 points used in the amount of time it takes to do one request. Since 30 is less than 50, one more round of requests started at the threshold still fits. Given that the amount of time we’re waiting for requests is roughly a second, this math should check out.
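The mental math above can be written out as a quick check. `isThresholdSafe` is a hypothetical helper, and the values are the ones used in this walkthrough.

```javascript
const mutationCost = 10;              // points per mutation
const numberOfParallelRequests = 3;   // requests sent per awaited round
const rateLimitThreshold = 50;        // points we never want to drop below

// A threshold is safe when one full round of requests costs no more than it.
function isThresholdSafe(threshold, cost, parallel) {
  return cost * parallel <= threshold;
}

console.log(mutationCost * numberOfParallelRequests); // 30
console.log(isThresholdSafe(rateLimitThreshold, mutationCost, numberOfParallelRequests)); // true
```

If you raise `numberOfParallelRequests`, rerun the same check: six parallel mutations would cost 60 points, which no longer fits under a threshold of 50.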

Now I expect that sometimes we’ll be off and it'll approach getting below 50, but that's when our business logic will protect us. We'll go over and implement that shortly.

Safeguarding with thresholds

So what is that business logic?

Now we want to take a look at the value that's stored in my `availableRateLimit` variable. After I've made those requests, what do I have left? If that's less than my `rateLimitThreshold`, the acceptable threshold that we’ve defined for ourselves, then we want to tell our application, "Wait a second. Hold on. We don't have room to keep making these requests indefinitely."

We’re doing this because we want to avoid hitting a bunch of 429s, which might actually make Shopify think we're a bot. The goal is to build good software, so that we can prevent making any requests that we know are going to hit a 429.

In order to do so we’re going to write in the below clause:

This is saying that `if` the `availableRateLimit` is less than (`<`) the `rateLimitThreshold`, we write a `log` saying that we need to wait. We want our app to wait. To do that in Node.js, we’re going to implement a new promise, `await new Promise`. Within this promise, we’ll pass in a `resolve` parameter, and we’ll define what takes place within the promise.

In this example, it's going to be a timeout, `setTimeout`. For the timeout, we’ll pass in a `function` to define what happens once that timeout is complete. In this case, we’re going to respond with `resolve('Rate limit wait')`. Then we’re going to insert another `log`: `console.log('Done waiting - continuing requests')`.

The last thing you want to provide to your `setTimeout(function)` is an integer for the timeout length. The unit is milliseconds, so we’re going to pass in 1,000, which means that we're waiting one second in total.
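Put together, the clause described above might look like the sketch below. The wording of the first log message is an assumption, and wrapping the check in a `pauseIfNeeded` function with a configurable delay is an illustrative choice; in the walkthrough the `if` sits directly inside the loop.

```javascript
const rateLimitThreshold = 50;

// Waits for the given delay when the available points are below the threshold.
// Resolves to true if it paused, false if there was room to continue.
async function pauseIfNeeded(availableRateLimit, delayMs = 1000) {
  if (availableRateLimit < rateLimitThreshold) {
    console.log('Rate limit threshold reached - waiting'); // assumed message
    await new Promise((resolve) => {
      setTimeout(function () {
        resolve('Rate limit wait');
      }, delayMs);
    });
    console.log('Done waiting - continuing requests');
    return true;
  }
  return false;
}

// Example: 40 points is below the threshold of 50, so this pauses for one second.
pauseIfNeeded(40);
```

Calling this at the top of each loop iteration means the loop only continues once the pause has finished, which gives the bucket time to refill.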

This is our failsafe to ensure we never hit our rate limit! This conditional is now stating that if we ever have less than the acceptable 50 points available, instead of going straight through the entire `loop` again, it’s going to wait one second, and then make those requests.

Before running that failsafe to see how it works in practice, I want you to change your `query` to fetch 249 products, because 250 would put you over your allowance of 1,000 points per query.

Alright, go ahead and run it!

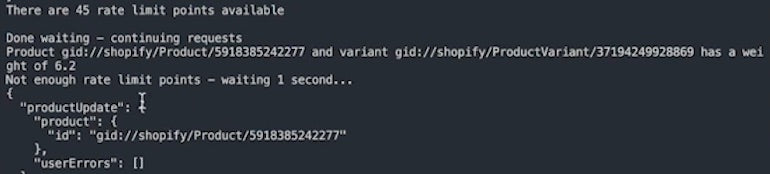

When you drop below 48, you should see a `log` that looks something like this:

The above is a `log` saying we hit our threshold, had to wait one second, and now we’re done and continuing our requests.

One thing to note is that when we run these promises this way, the `logs` come out slightly out of sequence. This is due to our mix of synchronous and asynchronous `rawRequests`.

Each `rawRequest` executes its `then` callback as soon as it completes. That being said, the end result still holds true: we're not making more requests when we're below our threshold. If you scroll through your terminal, you’ll see a lot of output, but no actual errors, which is great.

Running the final query

One thing I want to make clear is that implementing logic within your application is only one example of how to ensure you're optimizing your rate limit without going over. It's definitely not the only solution.

In a production app, you probably have a lot more available to you.

- You can implement a queuing model where you drop your request into a queue that shares resources between multiple threads.

- You could defer some execution and drop some ids into a database that a cron job or scheduled job works through, later iterating over each record and making those requests at a regular cadence (e.g., once a day, once an hour).
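As a rough illustration of the queuing idea, the sketch below keeps product ids in an in-memory array and only releases as many as the current rate limit allows. Everything here is hypothetical, including `enqueue` and `takeBatch`; a production app would more likely use a durable queue or a database table as described above.

```javascript
// Hypothetical in-memory queue of product ids waiting to be updated.
const queue = [];

function enqueue(productId) {
  queue.push(productId);
}

// Removes and returns as many queued ids as the available points allow,
// always leaving the threshold untouched.
function takeBatch(availableRateLimit, rateLimitThreshold, costPerJob) {
  const budget = Math.max(0, availableRateLimit - rateLimitThreshold);
  const count = Math.min(queue.length, Math.floor(budget / costPerJob));
  return queue.splice(0, count);
}

['p1', 'p2', 'p3'].forEach(enqueue);
console.log(takeBatch(70, 50, 10)); // [ 'p1', 'p2' ]: 20 spare points cover two 10-point jobs
```

A scheduled job could call `takeBatch` with the latest available points, send the returned ids, and leave the rest for the next run.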

The method outlined above should give you a sense of how we understand what our rate limit availability is, and what you can do with it, such as implementing a failsafe for when things get too close.

Now, if we run this script one more time, we see we have 50 rate limit points available. As we wait, our number increases again up into the hundreds, because every second we wait we get 50 more.

Each one of those lines is a product update, but I'm never concerned that it's going to hit my rate limit. It's always taken care of, and that's the goal. You want to ensure that you're optimizing and maximizing your API usage without going over, while building a fast app that gets the job done for the merchants that you're developing for.

I hope you found this post educational and it helped you get a better understanding of what API rate limiting looks like, as well as the best practices for working with it.
